Canonical

on 31 January 2016

This is part 4 of my ongoing “Deploying OpenStack on MAAS 1.9+ with Juju” series. The last post, “MAAS Setup: Deploying OpenStack on MAAS 1.9+ with Juju”, described the steps to deploy MAAS 1.9 itself and configure it with the fabrics, subnets, and VLANs we need to deploy OpenStack. In this post we’ll finish the MAAS setup, add the 4 dual-NIC NUC nodes to MAAS and commission them, and finally configure each node’s network interfaces as required.

Dual Zone MAAS Setup

MAAS physical-network layout for OpenStack

As I mentioned in the first post of the series, we’ll use 2 physical zones in MAAS – each containing 2 nodes (Intel NUCs) plugged into a VLAN-enabled switch. Switches are configured to trunk all VLANs on certain ports – those used to connect to the other switch or to the MAAS cluster controller. I’ve decided to use IP addresses with the following format:

10.X.Y.Z – where:

- X is either 14 (for the PXE-managed subnet 10.14.0.0/20) or equal to the ID of the VLAN the address is from (e.g. 10.150.0.100);

- Y is 0 for zone 1 and 1 for zone 2 (e.g. zone 1’s switch uses 10.14.0.2, while zone 2’s switch uses 10.14.1.2);

- Z is 1 only for the IP address of the MAAS cluster controller’s primary NIC (the default gateway) and 2 for each switch’s IP address. For nodes, Z either matches the number in the node’s hostname (as in the AMT power addresses, e.g. 10.14.0.11 for node-11) or is 100 + that number (when possible) – e.g. node-11’s IP in VLAN 250 will be 10.250.0.111, while node-21’s IP in VLAN 30 is 10.30.1.121.
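To double-check addresses while filling in the tables later on, the scheme can be captured in a small shell function. This is a minimal sketch, assuming the node numbering used in this post (the tens digit of node-NN is the zone number); `node_ip` is a hypothetical helper, not part of MAAS.

```shell
# node_ip VLAN NODE_NUM [OFFSET]   (hypothetical helper)
#   VLAN:     VLAN tag, or 0 for the untagged PXE subnet (X=14)
#   NODE_NUM: 11, 12, 21 or 22; the tens digit is the zone (zone1 -> Y=0, zone2 -> Y=1)
#   OFFSET:   added to NODE_NUM to get Z; 100 for node NICs (default), 0 for AMT addresses
node_ip() {
  if [ "$1" -eq 0 ]; then x=14; else x=$1; fi
  y=$(( $2 / 10 - 1 ))
  echo "10.$x.$y.$(( ${3:-100} + $2 ))"
}

node_ip 250 11    # prints 10.250.0.111 (node-11 in VLAN 250)
node_ip 30 21     # prints 10.30.1.121 (node-21 in VLAN 30)
node_ip 0 11 0    # prints 10.14.0.11 (node-11's AMT power address)
```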

MAAS comes with a “default” zone where all nodes end up when added. It cannot be removed, but we can easily add more using the MAAS CLI, like this:

$ maas 19-root zones create name=zone1 description='First MAAS zone, using maas-zone1-sw.maas (TP-LINK TL-SG1024DE 24-port switch)'
$ maas 19-root zones create name=zone2 description='Second MAAS zone, using maas-zone2-sw.maas (D-LINK DSG-1100-08 8-port switch)'

The same can be done from the MAAS web UI – click on “Zones” in the header, then click “Add zone”. The description is optional, but why not use it for clarity? When done, list all zones to verify; you should get the same output as below:

$ maas 19-root zones read
Success.
Machine-readable output follows:
[
    {
        "resource_uri": "/MAAS/api/1.0/zones/default/",
        "name": "default",
        "description": ""
    },
    {
        "resource_uri": "/MAAS/api/1.0/zones/zone1/",
        "name": "zone1",
        "description": "First MAAS zone, using maas-zone1-sw.maas (TP-LINK TL-SG1024DE 24-port switch)"
    },
    {
        "resource_uri": "/MAAS/api/1.0/zones/zone2/",
        "name": "zone2",
        "description": "Second MAAS zone, using maas-zone2-sw.maas (D-LINK DSG-1100-08 8-port switch)"
    }
]
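If you script the setup, the zones listing can also be checked automatically. A minimal sketch, assuming the pretty-printed `"name": "zone1"` format shown above; `has_zones` is a hypothetical helper that only greps the text, so a real JSON parser would be more robust.

```shell
# has_zones NAME... : read `maas <profile> zones read` output on stdin and fail
# (printing the first missing name to stderr) unless every NAME is listed.
has_zones() {
  listing=$(cat)
  for zone in "$@"; do
    if ! printf '%s\n' "$listing" | grep -qF "\"name\": \"$zone\""; then
      echo "missing zone: $zone" >&2
      return 1
    fi
  done
}

# Example (on the MAAS server):
# maas 19-root zones read | has_zones default zone1 zone2 && echo "all zones present"
```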

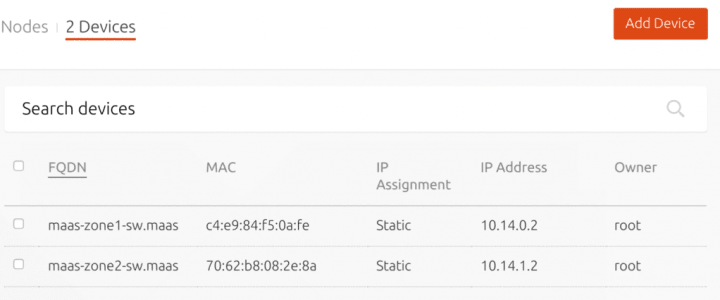

So what are those 2 hostnames mentioned above? MAAS can provide DHCP and DNS services not just to the nodes it manages, but also to other devices on the network – like switches, Apple TVs, containers, etc. While not required, I decided it would be nice to add the two switches as devices in MAAS, with the following CLI commands (or by clicking “Add Device” on the “Nodes” page):

$ maas 19-root devices new hostname=maas-zone1-sw mac_addresses=c4:e9:84:f5:0a:fe
$ maas 19-root devices new hostname=maas-zone2-sw mac_addresses=70:62:b8:08:2e:8a
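The two commands above can also be wrapped so that a mistyped MAC address is caught before anything reaches MAAS. A sketch only: `add_device`, `valid_mac`, `run`, and the `DRY_RUN`/`PROFILE` variables are hypothetical names, and the command itself is the same `devices new` call shown above.

```shell
# Hypothetical wrapper: set DRY_RUN=1 to print the commands instead of running them.
PROFILE=${PROFILE:-19-root}

run() {
  if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi
}

# valid_mac ADDR: true for six colon-separated hex byte pairs, e.g. c4:e9:84:f5:0a:fe
valid_mac() {
  printf '%s\n' "$1" | grep -Eqx '([0-9A-Fa-f]{2}:){5}[0-9A-Fa-f]{2}'
}

# add_device HOSTNAME MAC: register a non-node device (e.g. a switch) with MAAS
add_device() {
  if ! valid_mac "$2"; then
    echo "invalid MAC address for $1: $2" >&2
    return 1
  fi
  run maas "$PROFILE" devices new hostname="$1" mac_addresses="$2"
}

# Example:
# add_device maas-zone1-sw c4:e9:84:f5:0a:fe
# add_device maas-zone2-sw 70:62:b8:08:2e:8a
```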

Now the “Nodes” page in the UI will show “0 Nodes | 2 Devices”, and clicking on the latter shows a summary of both devices.

Adding and Commissioning All Nodes

OK, now we’re ready to add the nodes. Assuming all NUCs, switches, and MAAS itself are plugged in as described in the diagram at the beginning, the process is really simple: just turn them on! I’d recommend doing that one node at a time for simplicity, so you always know which one is currently on; when more than one NUC is on, you have to match MAC addresses to tell them apart.

What happens during the initial enlistment, in brief:

- MAAS will detect the new node while it’s trying to PXE boot.

- An ephemeral image will be given to the node, and during the initial boot some hardware information will be collected (takes a couple of minutes).

- When done, the node will shut down automatically.

- A node with status New will appear with a randomly generated hostname (quite funny sometimes).

You can then (from the UI or CLI) finish the node config and get it commissioned (so it’s ready to use):

- Accept the node and fill in the power settings to use, change the hostname, and set the zone.

- Once those changes are saved, MAAS should automatically commission the node (takes a bit longer and involves at least 2 reboots – again, node shuts down when done).

- During commissioning MAAS discovers the rest of the machine details, including hardware specs, storage, and networking.

- Unless commissioning fails, the node should transition from Commissioning to Ready.

A lot more details can be found in the MAAS Documentation. I’ll be using the web UI for the next steps, but of course they can also be done via the CLI, following the documentation. I’m happy to include those CLI steps later, if someone asks about them.

Remember the steps from an earlier post, “Preparing the NUCs for MAAS”? Now we need the IP and MAC addresses of each NUC’s on-board NIC and the password set in the Intel MEBx BIOS. Revisiting Dustin’s “Everything you need to know about Intel” blog post will help a lot should you need to redo or change the AMT settings.

Here is a summary of what to change for each node. Do it in order: from the UI, edit the New node, set the hostname and save, then set the zone and save again, and finally set the power settings.

| Node # | Hostname | Zone | Power Type | MAC Address | Power Password | Power Address |
|---|---|---|---|---|---|---|
| 1 | node-11.maas | zone1 | Intel AMT | on-board NIC’s MAC address | (as set in Intel MEBx) | 10.14.0.11 |
| 2 | node-12.maas | zone1 | Intel AMT | on-board NIC’s MAC address | (as set in Intel MEBx) | 10.14.0.12 |
| 3 | node-21.maas | zone2 | Intel AMT | on-board NIC’s MAC address | (as set in Intel MEBx) | 10.14.1.21 |
| 4 | node-22.maas | zone2 | Intel AMT | on-board NIC’s MAC address | (as set in Intel MEBx) | 10.14.1.22 |
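For reference, roughly the same settings could be applied from the CLI with `node update`, in the same order as in the UI. This is only a sketch: the `power_parameters_*` argument names for the AMT power type are assumptions here (mirroring the UI fields in the table), so verify them against the MAAS API documentation for your version. It does not cover accepting the node, and `setup_node`, `run`, and `DRY_RUN` are hypothetical.

```shell
# Hypothetical sketch; DRY_RUN=1 prints the commands instead of running them.
PROFILE=${PROFILE:-19-root}

run() {
  if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi
}

# setup_node SYSTEM_ID NODE_NUM MAC AMT_PASSWORD
#   NODE_NUM is 11, 12, 21 or 22; its tens digit selects zone1 or zone2, and the
#   AMT power address is 10.14.<zone - 1>.<NODE_NUM>, as in the table above.
setup_node() {
  zone=$(( $2 / 10 ))
  # Same order as in the UI: hostname, then zone, then power settings.
  run maas "$PROFILE" node update "$1" hostname="node-$2.maas" || return
  run maas "$PROFILE" node update "$1" zone="zone$zone" || return
  # ASSUMPTION: parameter names for the AMT power type; verify for your MAAS version.
  run maas "$PROFILE" node update "$1" power_type=amt \
    power_parameters_mac_address="$3" \
    power_parameters_power_pass="$4" \
    power_parameters_power_address="10.14.$(( zone - 1 )).$2"
}
```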

NOTE: While you edit the node in the UI, MAAS may show a warning suggesting that you install the wsmancli package on the cluster controller machine in order to use AMT. Verify that the amttool binary (which MAAS uses to power AMT nodes on and off) exists; if not, install it with $ sudo apt-get install wsmancli.
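On the cluster controller, that check can be scripted. A minimal sketch; `missing_cmds` is a hypothetical helper built only on the POSIX `command -v` builtin, and the example follows the note above.

```shell
# missing_cmds CMD... : print each CMD not found on PATH; return 1 if any is missing.
missing_cmds() {
  rc_missing=0
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "$cmd"
      rc_missing=1
    fi
  done
  return "$rc_missing"
}

# Example, following the note above:
# missing_cmds amttool || sudo apt-get install wsmancli
```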

Once all 4 nodes are ready, your “Nodes” page in the UI should list all of them with status Ready.

Now we can let MAAS deploy each node (one by one or all at once, it doesn’t matter) to verify it works. From the UI, click the checkbox next to FQDN to select all nodes, then from the “Take action” drop-down menu (which just replaced the “Add Hardware” button) pick Deploy, choose the series/kernel (the defaults are OK) and click “Go”. Watch as the nodes “come to life” and the UI auto-updates. It should take no more than 10-15 minutes for a node to get from Deploying to Deployed (unless an issue occurs). Try to SSH into each node (username “ubuntu”, and eth0’s IP address as seen in the node details page), then check external connectivity, DNS resolution, and that you can ping MAAS, both switches, the other nodes, etc.
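The connectivity checks can be run from each node with a small loop. A sketch, assuming the addresses from this post (10.14.0.1 for MAAS and the two switch hostnames registered earlier); `check_reachable` and `CHECK_CMD` are hypothetical, and `ping -W` is the Linux iputils timeout option.

```shell
# check_reachable HOST... : probe each host once and report ok/FAIL.
# CHECK_CMD can override the probe (default: one ping with a 2-second timeout).
check_reachable() {
  failed=0
  for host in "$@"; do
    if ${CHECK_CMD:-ping -c 1 -W 2} "$host" >/dev/null 2>&1; then
      echo "ok   $host"
    else
      echo "FAIL $host"
      failed=1
    fi
  done
  return "$failed"
}

# Example, from node-11 (hostnames also exercise DNS resolution via MAAS;
# ubuntu.com stands in for any external host):
# check_reachable 10.14.0.1 maas-zone1-sw.maas maas-zone2-sw.maas node-12.maas node-21.maas ubuntu.com
```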

Setting up Nodes Networking

Now that all your nodes can be deployed successfully, we need to change the network interfaces on each node to make them usable for hosting the needed OpenStack components.

As described in the first post, 3 of the nodes will host nova-compute units (with ntp and neutron-openvswitch as subordinates) and colocated ceph units. The remaining node will host neutron-gateway (with ntp as a subordinate) and ceph-osd, and is also used for the Juju API server. The rest of the OpenStack services are deployed inside LXC containers, distributed across the 4 nodes.

We can summarize each node’s connectivity requirements (i.e. which subnet each NIC should be linked to and which IP address it should use) in the following matrix:

| Space | default | public-api | internal-api | admin-api | storage-data | compute-data | storage-cluster | compute-external |
|---|---|---|---|---|---|---|---|---|
| VLAN ID | untagged (0) | 50 | 100 | 150 | 200 | 250 | 30 | 99 |
| Subnet / Node | 10.14.0.0/20 | 10.50.0.0/20 | 10.100.0.0/20 | 10.150.0.0/20 | 10.200.0.0/20 | 10.250.0.0/20 | 10.30.0.0/20 | 10.99.0.0/20 |
| node-11 | eth0 10.14.0.111 | eth0.50 10.50.0.111 | eth0.100 10.100.0.111 | eth0.150 10.150.0.111 | eth0.200 10.200.0.111 | eth0.250 10.250.0.111 | X | eth1 (IP unused) |
| node-12 | eth0 10.14.0.112 | eth0.50 10.50.0.112 | eth0.100 10.100.0.112 | eth0.150 10.150.0.112 | eth0.200 10.200.0.112 | eth0.250 10.250.0.112 | eth1 10.30.0.112 | X |
| node-21 | eth0 10.14.1.121 | eth0.50 10.50.1.121 | eth0.100 10.100.1.121 | eth0.150 10.150.1.121 | eth0.200 10.200.1.121 | eth0.250 10.250.1.121 | eth1 10.30.1.121 | X |
| node-22 | eth0 10.14.1.122 | eth0.50 10.50.1.122 | eth0.100 10.100.1.122 | eth0.150 10.150.1.122 | eth0.200 10.200.1.122 | eth0.250 10.250.1.122 | eth1 10.30.1.122 | X |
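Each row of the matrix follows directly from the addressing scheme, so it can be generated instead of typed. A sketch, assuming the node numbering from this post; `node_row` is hypothetical and prints one “VLAN NIC address” line per column a node uses (VLAN 0 meaning the untagged PXE subnet).

```shell
# node_row NODE_NUM : print "VLAN NIC ADDRESS" for each matrix column the node uses.
node_row() {
  n=$1
  y=$(( n / 10 - 1 ))   # zone1 -> 0, zone2 -> 1
  z=$(( 100 + n ))      # e.g. node-21 -> 121
  echo "0 eth0 10.14.$y.$z"
  for vid in 50 100 150 200 250; do
    echo "$vid eth0.$vid 10.$vid.$y.$z"
  done
  if [ "$n" -eq 11 ]; then
    echo "99 eth1 unused"        # compute-external: Neutron ignores the IP
  else
    echo "30 eth1 10.30.$y.$z"   # storage-cluster
  fi
}
```

For example, `node_row 21` prints the node-21 row as seven lines, starting with `0 eth0 10.14.1.121` and ending with `30 eth1 10.30.1.121`.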

Since I found some issues while using the web UI to configure node NICs, I recommend using the CLI instead for the remaining steps, which roughly are:

- We start from the basic dual-NIC config: after commissioning, MAAS creates 2 physical NICs for each node.

- Additionally, on each node we need to create as many VLAN NICs as specified above.

- For node-11 in particular, we need the second NIC, eth1, to be linked to VLAN 99 and subnet 10.99.0.0/20 in the “compute-external” space. This is required for the OpenStack Neutron Gateway to work as expected. There is no need to assign an IP address, as Neutron will ignore it.

- Finally, we need to link each NIC (physical or VLAN) to the subnet it needs to use and associate a static IP address with it.

NOTE: Using statically assigned IP addresses for each NIC vs. auto-assigned addresses is not required, but I’d like to have fewer gaps to fill in and more consistency across the board.

TIP: In case you need to later redo the network config from scratch, the easiest way I found is to simply re-commission all nodes, which will reset any existing NICs except for the physical ones discovered (again) during commissioning.

We can create a VLAN NIC on a node with the following CLI command (taking the node’s system ID, and the MAAS IDs for the VLAN and parent NIC):

$ maas 19-root interfaces create-vlan <node-id> vlan=<vlan-maas-id> parent=<nic-maas-id>

NOTE: It’s confusing, but the “vlan=” argument expects to see the (database) ID of the VLAN (e.g. 5009 – all of these are >5000), NOT the VLAN “tag” (e.g. 200) as you might expect.

Unfortunately, we can’t use a prefixed reference for the vlan and parent arguments. It would’ve been a nicer experience if the create-vlan CLI supported “vlan=vid:50” and “parent=name:eth0”. So we first need to list all VLANs in the “maas-management” fabric to get their IDs, and to do that we also need to know the fabric ID. Once we have the VLAN IDs, we then need the IDs of both physical NICs of the node. To summarize, these are the commands to run initially to get all the VLAN IDs we need:

$ maas 19-root fabrics read    # let's assume "maas-management" fabric has ID=1
$ maas 19-root vlans read 1    # let's assume VLAN #50 has ID=5050, VLAN #100 has ID=5100, etc.
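Rather than copying the VLAN IDs by hand, the `vlans read` output can be turned into the shell variables used later in this post (`$VLAN50_ID` and so on). A minimal sketch: `vlan_id_vars` is hypothetical and assumes each VLAN object is pretty-printed with one `"key": value` pair per line and includes `"id"` and `"vid"` fields; a proper JSON tool such as jq would be more robust.

```shell
# vlan_id_vars: read `maas <profile> vlans read <fabric-id>` output on stdin and
# print one VLAN<tag>_ID=<database id> line per VLAN object.
vlan_id_vars() {
  awk '
    /"vid":/ { v = $2; gsub(/[^0-9]/, "", v); vid = v }
    /"id":/  { v = $2; gsub(/[^0-9]/, "", v); id = v }
    /[}]/    {
      if (vid != "" && id != "") print "VLAN" vid "_ID=" id
      vid = ""; id = ""
    }
  '
}

# Example: define $VLAN50_ID, $VLAN100_ID, ... in the current shell
# eval "$(maas 19-root vlans read 1 | vlan_id_vars)"
```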

Once we have these, on each node we run one or more of the following commands to set up each needed VLAN NIC and assign a static IP to it:

$ maas 19-root interfaces read node-1344f864-afc3-11e5-b374-001a4b79bef8    # let's assume eth0 has ID=1000, eth1 has ID=2000
$ maas 19-root interfaces create-vlan node-1344f864-afc3-11e5-b374-001a4b79bef8 vlan=5050 parent=1000    # creates eth0.50 on the node, returning its ID, e.g. 1050
$ maas 19-root interface link-subnet node-1344f864-afc3-11e5-b374-001a4b79bef8 eth0.50 subnet=cidr:10.50.0.0/20 mode=static ip_address=10.50.0.111    # assigns the static IP 10.50.0.111 to the eth0.50 we just created

See how much nicer the link-subnet command is to use? It lets you refer to “eth0.50” instead of the NIC ID (e.g. 1050), and similarly to “cidr:10.50.0.0/20” instead of the subnet ID.

There is also an unlink-subnet command, which requires the ID of the “link” to remove. All links of an interface are listed in the optional “links” attribute, part of the response to interface read <id|name> (or any other command whose response includes interface(s)). Finally, we can change the VLAN a given NIC is associated with using interface update $NODE_ID $NIC_ID vlan=$VLAN_ID. We need to do that for each node’s second NIC, and then also run link-subnet to assign the IP address we want to the node’s eth1.

NOTE: Running link-subnet more than once per NIC creates “aliases”, e.g. “eth1:1”. Additionally, running unlink-subnet with the ID of the only remaining link of the NIC surprisingly succeeds, but then MAAS (I guess to enforce referential integrity of the DB or something) auto-creates a link in mode “auto” to the PXE subnet. This means there’s NO WAY to change the mode or assigned IP address of an existing link on an interface with the CLI or the API it exposes. You can do it easily via the web UI though, as it “cheats” by having a hidden “link_id” argument to “link-subnet” to distinguish between “create a new link” and “update an existing link”. Unfortunately, since the web UI uses a websocket-based connection with separate API handlers, there is a disparity between the CLI/API features exposed by MAAS to other clients and the websocket API exposed to the UI webapp only. So the set of steps around configuring eth1 is unnecessarily complicated – change its VLAN, link the subnet to set the IP, unlink the first link we don’t need (which is likely using the PXE subnet in auto mode).
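The three-step eth1 workaround can be wrapped in one function, so each node only needs its own IDs. A sketch under the assumptions of this post: the arguments are the node’s system ID, eth1’s ID, the VLAN’s database ID (not its tag), the subnet CIDR, the static IP, and the ID of eth1’s existing link; `configure_eth1`, `run`, and `DRY_RUN` are hypothetical.

```shell
PROFILE=${PROFILE:-19-root}

# DRY_RUN=1 prints the commands instead of running them.
run() {
  if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi
}

# configure_eth1 NODE_ID ETH1_ID VLAN_DB_ID CIDR IP FIRST_LINK_ID
configure_eth1() {
  # 1. Move eth1 to the target VLAN.
  run maas "$PROFILE" interface update "$1" "$2" vlan="$3" || return
  # 2. Add a static link (a new link: the CLI cannot edit the existing one).
  run maas "$PROFILE" interface link-subnet "$1" eth1 \
    subnet="cidr:$4" mode=static ip_address="$5" || return
  # 3. Remove the link left over from commissioning (likely PXE subnet, mode auto).
  run maas "$PROFILE" interface unlink-subnet "$1" eth1 id="$6"
}

# Example for node-21 (storage-cluster, VLAN 30):
# configure_eth1 "$NODE21_ID" "$NODE21_ETH1_ID" "$VLAN30_ID" 10.30.0.0/20 10.30.1.121 "$NODE21_ETH1_FIRST_LINK_ID"
```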

Now we can use the CLI commands described above to configure each node’s NICs as required (see the matrix at the beginning of this section). For simplicity, in the commands below we’ll use variables like $VLAN50_ID, $NODE11_ID, $NODE21_ETH0_ID, and $NODE22_ETH1_FIRST_LINK_ID to refer to any IDs we need. These variables should make it easier to automate the steps with a script that pre-populates the IDs and then runs the steps.
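Since the five VLAN NICs on each node differ only in the tag and address, the per-node VLAN commands can also be produced by a loop. A sketch, assuming the `$VLAN<tag>_ID` variables hold the VLANs’ database IDs and the node numbering used throughout; `configure_vlan_nics`, `run`, and `DRY_RUN` are hypothetical.

```shell
PROFILE=${PROFILE:-19-root}

# DRY_RUN=1 prints the commands instead of running them.
run() {
  if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi
}

# configure_vlan_nics NODE_ID ETH0_ID NODE_NUM
# Creates eth0.<tag> for VLANs 50-250 and links each to 10.<tag>.0.0/20 with the
# static address 10.<tag>.<zone - 1>.<100 + NODE_NUM>.
configure_vlan_nics() {
  node_id=$1; eth0_id=$2
  y=$(( $3 / 10 - 1 )); z=$(( 100 + $3 ))
  for vid in 50 100 150 200 250; do
    # Look up $VLAN<vid>_ID; the shell exits with an error if it is not set.
    eval "vlan_id=\${VLAN${vid}_ID:?VLAN${vid}_ID is not set}"
    run maas "$PROFILE" interfaces create-vlan "$node_id" vlan="$vlan_id" parent="$eth0_id" || return
    run maas "$PROFILE" interface link-subnet "$node_id" "eth0.$vid" \
      subnet="cidr:10.$vid.0.0/20" mode=static ip_address="10.$vid.$y.$z" || return
  done
}
```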

node-11

$ maas 19-root interfaces create-vlan $NODE11_ID vlan=$VLAN50_ID parent=$NODE11_ETH0_ID
$ maas 19-root interface link-subnet $NODE11_ID eth0.50 subnet=cidr:10.50.0.0/20 mode=static ip_address=10.50.0.111
$ maas 19-root interfaces create-vlan $NODE11_ID vlan=$VLAN100_ID parent=$NODE11_ETH0_ID
$ maas 19-root interface link-subnet $NODE11_ID eth0.100 subnet=cidr:10.100.0.0/20 mode=static ip_address=10.100.0.111
$ maas 19-root interfaces create-vlan $NODE11_ID vlan=$VLAN150_ID parent=$NODE11_ETH0_ID
$ maas 19-root interface link-subnet $NODE11_ID eth0.150 subnet=cidr:10.150.0.0/20 mode=static ip_address=10.150.0.111
$ maas 19-root interfaces create-vlan $NODE11_ID vlan=$VLAN200_ID parent=$NODE11_ETH0_ID
$ maas 19-root interface link-subnet $NODE11_ID eth0.200 subnet=cidr:10.200.0.0/20 mode=static ip_address=10.200.0.111
$ maas 19-root interfaces create-vlan $NODE11_ID vlan=$VLAN250_ID parent=$NODE11_ETH0_ID
$ maas 19-root interface link-subnet $NODE11_ID eth0.250 subnet=cidr:10.250.0.0/20 mode=static ip_address=10.250.0.111
# ensure eth1 is on VLAN 99 as needed by Neutron
$ maas 19-root interface update $NODE11_ID $NODE11_ETH1_ID vlan=$VLAN99_ID
# CLI limitation: you can't update an existing link and also
# you can't configure with mode=link_up (unconfigured) if ANY
# other link with mode=auto|static exists.
$ maas 19-root interface link-subnet $NODE11_ID eth1 subnet=cidr:10.99.0.0/20 mode=static ip_address=10.99.0.111
$ maas 19-root interface unlink-subnet $NODE11_ID eth1 id=$NODE11_ETH1_FIRST_LINK_ID

node-12

$ maas 19-root interfaces create-vlan $NODE12_ID vlan=$VLAN50_ID parent=$NODE12_ETH0_ID
$ maas 19-root interface link-subnet $NODE12_ID eth0.50 subnet=cidr:10.50.0.0/20 mode=static ip_address=10.50.0.112
$ maas 19-root interfaces create-vlan $NODE12_ID vlan=$VLAN100_ID parent=$NODE12_ETH0_ID
$ maas 19-root interface link-subnet $NODE12_ID eth0.100 subnet=cidr:10.100.0.0/20 mode=static ip_address=10.100.0.112
$ maas 19-root interfaces create-vlan $NODE12_ID vlan=$VLAN150_ID parent=$NODE12_ETH0_ID
$ maas 19-root interface link-subnet $NODE12_ID eth0.150 subnet=cidr:10.150.0.0/20 mode=static ip_address=10.150.0.112
$ maas 19-root interfaces create-vlan $NODE12_ID vlan=$VLAN200_ID parent=$NODE12_ETH0_ID
$ maas 19-root interface link-subnet $NODE12_ID eth0.200 subnet=cidr:10.200.0.0/20 mode=static ip_address=10.200.0.112
$ maas 19-root interfaces create-vlan $NODE12_ID vlan=$VLAN250_ID parent=$NODE12_ETH0_ID
$ maas 19-root interface link-subnet $NODE12_ID eth0.250 subnet=cidr:10.250.0.0/20 mode=static ip_address=10.250.0.112
# eth1 needs to be on VLAN 30 and subnet 10.30.0.0/20, used for ceph cluster replication.
$ maas 19-root interface update $NODE12_ID $NODE12_ETH1_ID vlan=$VLAN30_ID
$ maas 19-root interface link-subnet $NODE12_ID eth1 subnet=cidr:10.30.0.0/20 mode=static ip_address=10.30.0.112
$ maas 19-root interface unlink-subnet $NODE12_ID eth1 id=$NODE12_ETH1_FIRST_LINK_ID

node-21

$ maas 19-root interfaces create-vlan $NODE21_ID vlan=$VLAN50_ID parent=$NODE21_ETH0_ID
$ maas 19-root interface link-subnet $NODE21_ID eth0.50 subnet=cidr:10.50.0.0/20 mode=static ip_address=10.50.1.121
$ maas 19-root interfaces create-vlan $NODE21_ID vlan=$VLAN100_ID parent=$NODE21_ETH0_ID
$ maas 19-root interface link-subnet $NODE21_ID eth0.100 subnet=cidr:10.100.0.0/20 mode=static ip_address=10.100.1.121
$ maas 19-root interfaces create-vlan $NODE21_ID vlan=$VLAN150_ID parent=$NODE21_ETH0_ID
$ maas 19-root interface link-subnet $NODE21_ID eth0.150 subnet=cidr:10.150.0.0/20 mode=static ip_address=10.150.1.121
$ maas 19-root interfaces create-vlan $NODE21_ID vlan=$VLAN200_ID parent=$NODE21_ETH0_ID
$ maas 19-root interface link-subnet $NODE21_ID eth0.200 subnet=cidr:10.200.0.0/20 mode=static ip_address=10.200.1.121
$ maas 19-root interfaces create-vlan $NODE21_ID vlan=$VLAN250_ID parent=$NODE21_ETH0_ID
$ maas 19-root interface link-subnet $NODE21_ID eth0.250 subnet=cidr:10.250.0.0/20 mode=static ip_address=10.250.1.121
# eth1 needs to be on VLAN 30 and subnet 10.30.0.0/20, used for ceph cluster replication.
$ maas 19-root interface update $NODE21_ID $NODE21_ETH1_ID vlan=$VLAN30_ID
$ maas 19-root interface link-subnet $NODE21_ID eth1 subnet=cidr:10.30.0.0/20 mode=static ip_address=10.30.1.121
$ maas 19-root interface unlink-subnet $NODE21_ID eth1 id=$NODE21_ETH1_FIRST_LINK_ID

node-22

$ maas 19-root interfaces create-vlan $NODE22_ID vlan=$VLAN50_ID parent=$NODE22_ETH0_ID
$ maas 19-root interface link-subnet $NODE22_ID eth0.50 subnet=cidr:10.50.0.0/20 mode=static ip_address=10.50.1.122
$ maas 19-root interfaces create-vlan $NODE22_ID vlan=$VLAN100_ID parent=$NODE22_ETH0_ID
$ maas 19-root interface link-subnet $NODE22_ID eth0.100 subnet=cidr:10.100.0.0/20 mode=static ip_address=10.100.1.122
$ maas 19-root interfaces create-vlan $NODE22_ID vlan=$VLAN150_ID parent=$NODE22_ETH0_ID
$ maas 19-root interface link-subnet $NODE22_ID eth0.150 subnet=cidr:10.150.0.0/20 mode=static ip_address=10.150.1.122
$ maas 19-root interfaces create-vlan $NODE22_ID vlan=$VLAN200_ID parent=$NODE22_ETH0_ID
$ maas 19-root interface link-subnet $NODE22_ID eth0.200 subnet=cidr:10.200.0.0/20 mode=static ip_address=10.200.1.122
$ maas 19-root interfaces create-vlan $NODE22_ID vlan=$VLAN250_ID parent=$NODE22_ETH0_ID
$ maas 19-root interface link-subnet $NODE22_ID eth0.250 subnet=cidr:10.250.0.0/20 mode=static ip_address=10.250.1.122
# eth1 needs to be on VLAN 30 and subnet 10.30.0.0/20, used for ceph cluster replication.
$ maas 19-root interface update $NODE22_ID $NODE22_ETH1_ID vlan=$VLAN30_ID
$ maas 19-root interface link-subnet $NODE22_ID eth1 subnet=cidr:10.30.0.0/20 mode=static ip_address=10.30.1.122
$ maas 19-root interface unlink-subnet $NODE22_ID eth1 id=$NODE22_ETH1_FIRST_LINK_ID

We can verify that the steps worked by listing all NICs of each node with $ maas 19-root interfaces read $NODE##_ID, or with a quick look at the web UI.
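That check can also be automated per node. A minimal sketch: `missing_ips` is hypothetical and simply greps the `interfaces read` output for each expected address as a quoted string, which assumes MAAS reports assigned addresses as JSON strings.

```shell
# missing_ips IP... : read `maas <profile> interfaces read <node>` output on stdin and
# print every IP that does not appear in it; return 1 if any is missing.
missing_ips() {
  listing=$(cat)
  rc_ips=0
  for ip in "$@"; do
    if ! printf '%s\n' "$listing" | grep -qF "\"$ip\""; then
      echo "$ip"
      rc_ips=1
    fi
  done
  return "$rc_ips"
}

# Example for node-21:
# maas 19-root interfaces read "$NODE21_ID" | missing_ips 10.50.1.121 10.100.1.121 10.150.1.121 10.200.1.121 10.250.1.121 10.30.1.121
```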

Next Steps: Advanced networking with Juju (on MAAS)

Whew… quite a few steps are needed to configure MAAS nodes for complex networking scenarios. Automating them with scripts seems the obvious solution; in fact, I’m working on a generalized solution, which I’ll post about soon, once it’s in a usable state.

We now have the nodes configured the way we want them. In the following post we’ll take a deeper look at how to orchestrate the deployment of OpenStack on this MAAS with Juju. You’ll get a sneak peek at the advanced networking features coming to Juju 2.0 (in time for the Ubuntu 16.04 release). Stay tuned, and thanks for reading so far!